Introduction: A New Era of Medicine

Imagine walking into a hospital where your doctor has a powerful assistant—one that never sleeps, never forgets a medical study, and can analyze millions of patient cases in seconds. This isn’t science fiction anymore. Artificial Intelligence is fundamentally reshaping how we diagnose diseases, treat patients, and manage healthcare systems across the globe.

From busy emergency rooms in New York to rural clinics in developing nations, AI technologies are making their presence felt in ways both profound and practical. Radiologists now work alongside algorithms that can flag subtle tumors a human reader might miss. Hospital administrators use predictive models to anticipate patient surges before they happen. Nurses rely on smart monitoring systems that can alert them to deteriorating patients hours earlier than traditional methods would.

Yet with this transformation comes a complex web of questions and concerns. As healthcare systems deploy AI technologies more broadly, data from surveys, market reports, and clinical studies reveal both significant benefits and meaningful risks that stakeholders must carefully navigate. The promise is enormous, but so too are the challenges—from data privacy concerns to the risk of algorithmic bias, from the potential erosion of clinical skills to the ethical dilemmas of machine-made medical decisions.

This article provides a comprehensive, data-oriented overview to help readers understand what’s real, measurable, and credible about AI in healthcare. We’ll explore not just the numbers and statistics, but the human stories behind them—the patients whose lives have been saved, the doctors whose work has been transformed, and the communities grappling with the implications of this technological revolution.

Current Adoption and Market Growth of AI in Healthcare

The integration of AI into healthcare isn’t a distant possibility. It’s happening right now, at an accelerating pace that has surprised even industry insiders. Walk into most major hospitals today, and you’ll find AI systems quietly working in the background, analyzing X-rays, predicting patient outcomes, optimizing staff schedules, and managing supply chains.

The numbers tell a compelling story of rapid adoption and enthusiastic investment:

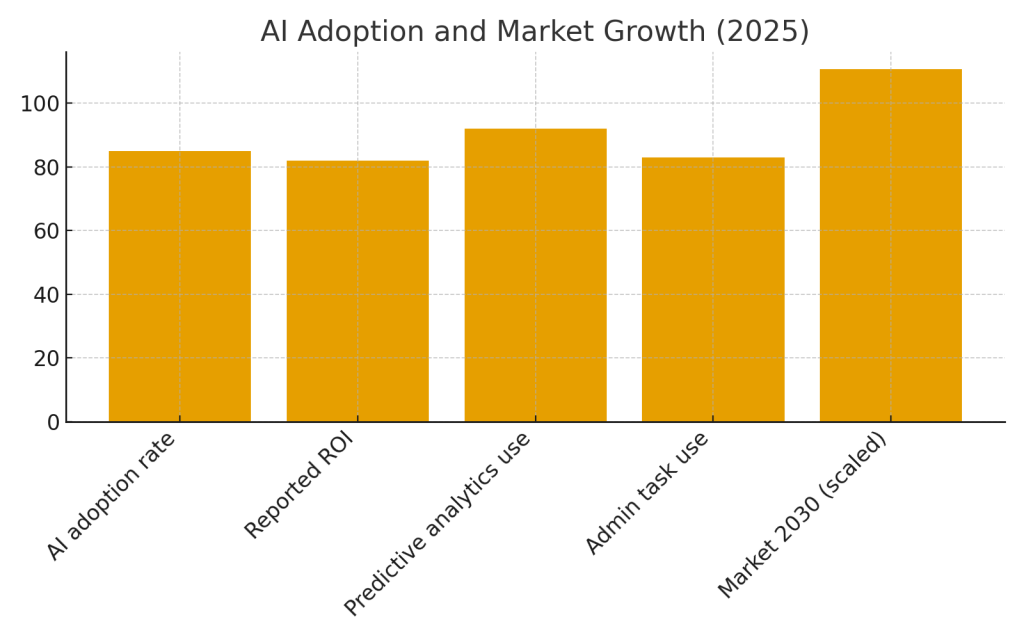

Table 1: AI Adoption and Market Growth (2025)

| Metric | Statistic | Source |
|---|---|---|
| AI adoption rate by healthcare organizations | 85% | ventionteams.com |
| Reported ROI (moderate to high) | 82% | ventionteams.com |
| AI in clinical environments (predictive analytics) | 92% | Master of Code Global |
| AI for administrative tasks | 83% | Master of Code Global |
| Projected global AI healthcare market (2030) | $110.61B | ventionteams.com |

What These Numbers Really Mean

These statistics aren’t just abstract figures—they represent a fundamental shift in healthcare delivery. When 85% of healthcare organizations report active AI usage, it means that whether you’re visiting a community clinic or a specialized cancer center, there’s a good chance AI is playing some role in your care journey.

The 82% reporting moderate to high ROI tells us something equally important: this isn’t just experimental technology anymore. Healthcare leaders—notoriously conservative about adopting new tools—are seeing measurable returns on their AI investments. These returns manifest in various ways: emergency departments that can better predict patient volumes and staff accordingly, radiology departments that can handle more cases without burning out their physicians, and administrative teams that spend less time on paperwork and more time on patient care.

Perhaps most striking is the 92% adoption rate for predictive analytics in clinical environments. This means that in hospitals across the country, algorithms are actively working to predict which patients might develop complications, who might be readmitted after discharge, or which individuals are at risk for sepsis or cardiac events. These aren’t futuristic concepts—they’re daily clinical tools.

By the mid-2020s, the majority of healthcare providers have moved beyond pilot programs and proofs of concept. They’re implementing AI at scale, with strong confidence in measurable returns and operational impact. Predictive analytics and workflow automation now lead both clinical and administrative adoption, fundamentally changing how healthcare organizations operate.

The projected market growth to $110.61 billion by 2030 reflects not just optimism but concrete investment and deployment plans across the industry. This expansion is being driven by multiple factors: aging populations in developed nations, chronic disease management needs, healthcare worker shortages, and the continuing pressure to reduce costs while improving outcomes.

Tangible Benefits of AI in Medicine

When we talk about AI’s benefits in healthcare, it’s easy to get lost in abstract promises and vague claims of “better care.” But the reality is far more concrete and measurable. Real hospitals are saving real time, real doctors are making better diagnoses, and real patients are experiencing improved outcomes. Let’s examine these benefits through the lens of hard data and actual implementation stories.

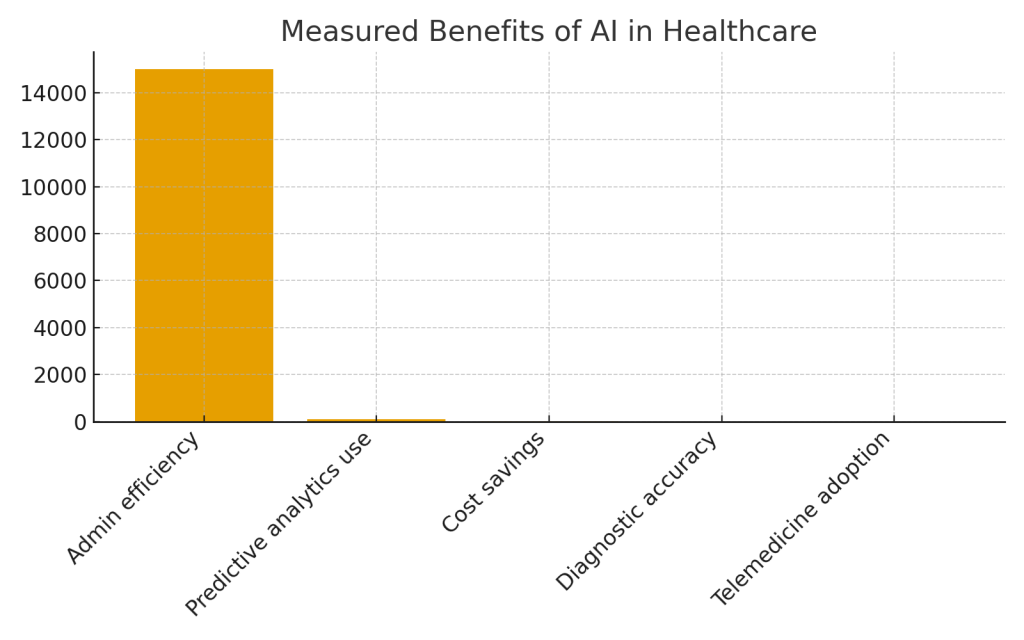

Table 2: Measured Benefits of AI in Healthcare

| Benefit Area | Key Outcome / Impact | Source |
|---|---|---|
| Improved administrative efficiency | Document automation saved ~15,000 employee hours monthly | Business Insider |
| Diagnostic support and predictive analytics | 92% use in predicting patient health trajectories | Master of Code Global |
| Resource management | 10–20% cost savings in operational areas (staffing, scheduling) | IntuitionLabs |
| Enhanced diagnostic accuracy | AI diagnostics often exceed traditional performance | TIME |
| Telemedicine and remote patient monitoring | Expanded access and continuous monitoring solutions | arXiv |

Administrative Liberation: Giving Clinicians Back Their Time

One of the most immediate and tangible benefits of AI in healthcare isn’t glamorous, but it’s deeply meaningful to anyone who works in a hospital or clinic: getting rid of paperwork. Consider what 15,000 saved employee hours per month actually means. That’s roughly 500 hours per calendar day. At about 173 working hours per month for a full-time employee on a 40-hour week, it is equivalent to having roughly 87 additional full-time employees, without hiring a single new person.
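The full-time-equivalent figure for those 15,000 hours depends on how a full-time month is counted. The short sketch below is only an illustration: it assumes a 40-hour work week, and the helper name `fte_equivalent` is made up for this example. It compares dividing the daily figure by an 8-hour shift with dividing the monthly total by average full-time hours per month.

```python
def fte_equivalent(hours_saved_per_month: float, hours_per_week: float = 40.0) -> float:
    """Convert monthly hours saved into full-time-equivalent (FTE) staff.

    Assumes 52 working weeks spread evenly over 12 months.
    """
    monthly_hours_per_fte = hours_per_week * 52 / 12  # about 173.3 hours
    return hours_saved_per_month / monthly_hours_per_fte


hours_saved = 15_000  # monthly figure reported in Table 2

# Spread over a 30-day month, ignoring weekends and holidays.
per_calendar_day = hours_saved / 30

# Treats every calendar day as an 8-hour workday, which understates the FTE count.
naive_fte = per_calendar_day / 8

# Uses average full-time hours per month instead.
fte = fte_equivalent(hours_saved)

print(f"Hours per calendar day: {per_calendar_day:.0f}")
print(f"FTE if every calendar day were a workday: {naive_fte:.1f}")
print(f"FTE at a 40-hour week: {fte:.1f}")
```

Under these assumptions the same 15,000 hours corresponds to somewhere between roughly 62 and 87 full-time staff, depending on which convention is used.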

These hours aren’t just numbers on a spreadsheet. They represent nurses who can spend more time at the bedside instead of clicking through electronic health records. They’re doctors who can look their patients in the eye during consultations rather than typing notes. They’re administrative staff who can focus on helping confused patients navigate the system rather than filling out forms in triplicate.

AI-powered documentation systems now listen to doctor-patient conversations and generate clinical notes automatically. They extract key information from scanned documents and populate databases without human data entry. They route messages, schedule appointments, verify insurance coverage, and handle countless other tasks that used to consume hours of human attention.

Diagnostic Revolution: The AI as Clinical Detective

Perhaps the most celebrated application of AI in medicine is in diagnostics, where machine learning algorithms have demonstrated remarkable—sometimes superhuman—capabilities in pattern recognition. In radiology departments worldwide, AI systems now assist in reading mammograms, chest X-rays, CT scans, and MRIs, often identifying subtle abnormalities that even experienced radiologists might miss.

Take the example of diabetic retinopathy, a leading cause of blindness that can be prevented if caught early. AI systems can now screen retinal images with accuracy matching or exceeding that of ophthalmologists, making it possible to deploy screening programs in underserved areas where specialists are scarce. In some developing countries, a smartphone app with AI-powered analysis is bringing eye care to rural communities that have never had access to an ophthalmologist.

In pathology, AI systems help analyze tissue samples for cancer, reducing the time between biopsy and diagnosis from days to hours. These systems don’t just look at individual cells—they analyze patterns across entire tissue samples, identifying subtle markers that correlate with specific cancer subtypes and predicting which treatments are most likely to be effective.

The 92% adoption rate for predictive analytics in clinical settings represents another dimension of diagnostic support. These systems continuously monitor patient data—vital signs, lab results, medication lists—looking for patterns that suggest developing problems. They can alert clinicians to patients at high risk for sepsis hours before obvious symptoms appear, predict which patients are likely to need intensive care, and identify individuals at risk for hospital-acquired infections.

Resource Management: Making Healthcare More Efficient

Healthcare institutions face constant challenges in managing resources—operating rooms need to be scheduled efficiently, staff levels need to match patient volumes, supplies need to be available but not overstocked, and equipment needs to be maintained before it breaks down.

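AI-powered resource management systems are reported to achieve 10–20% cost savings in operational areas such as staffing and scheduling. These savings represent more than just reduced expenses: they reflect operating rooms that are scheduled more efficiently, staffing levels that better match patient volumes, and supplies that are available without being overstocked.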

Expanding Access Through Telemedicine and Remote Monitoring

The COVID-19 pandemic dramatically accelerated telemedicine adoption, but AI is what’s making remote care sustainable and effective at scale. AI-powered chatbots now handle routine patient questions, freeing up nurses and doctors for complex cases. Remote monitoring systems use machine learning to identify which patients need immediate attention and which are stable, preventing alarm fatigue while ensuring truly urgent situations get rapid response.

For patients with chronic conditions like heart failure or diabetes, AI-enabled home monitoring systems provide continuous oversight that was previously impossible outside a hospital setting. These systems don’t just collect data—they interpret it, identifying concerning trends and alerting healthcare teams before small problems become emergencies. This means fewer hospital admissions, better quality of life for patients, and reduced healthcare costs.

The Human Impact Behind the Statistics

These benefits aren’t just about efficiency and cost savings—they translate into real improvements in human lives. Faster diagnoses mean less time spent in anxious uncertainty. Better resource management means shorter wait times and less chaotic, stressful healthcare experiences. Enhanced diagnostic accuracy means catching diseases earlier when they’re more treatable, or avoiding unnecessary biopsies and procedures when suspicious findings turn out to be benign.

Healthcare workers, too, benefit immensely. Reduced administrative burden means less burnout and more job satisfaction. Better decision support means less fear of missing important diagnoses. More efficient resource management means less chaotic work environments and more predictable schedules.

Key Risks of AI in Healthcare

Every powerful technology brings new risks alongside its benefits, and AI in healthcare is no exception. While the promise of AI is compelling, multiple challenges and concerns persist—and in some cases, the risks are as significant as the benefits are profound. Understanding these risks isn’t about resisting progress; it’s about implementing AI thoughtfully, with eyes wide open to potential pitfalls and unintended consequences.

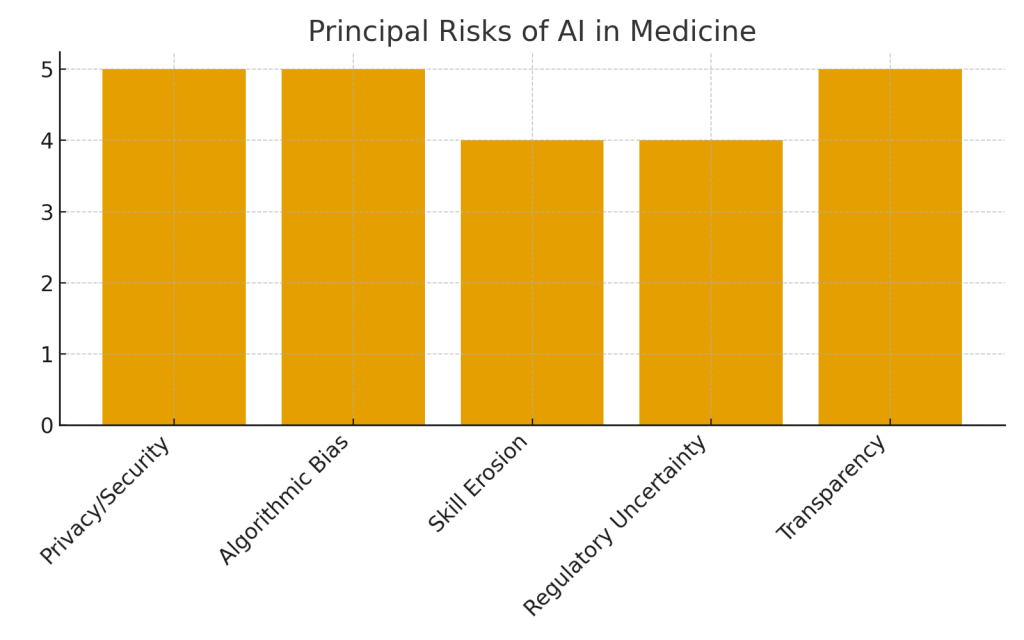

Table 3: Principal Risks and Concerns with AI in Medicine

| Risk Category | Illustrative Data / Concept | Source |
|---|---|---|
| Data privacy and security | AI systems require large medical datasets, raising breach concerns | Berry Solutions Group |
| Algorithmic bias | Potential unfair outcomes due to non-representative training data | i-jmr.org |
| Over-reliance and skill erosion | Routine use may decrease clinicians’ independent diagnostic skills | Financial Times |
| Regulatory and legal uncertainty | Lack of clear AI standards in clinical practice | datascienceforbio.com |
| Transparency and trust | Patients and doctors seek clarity on AI decision logic | keragon.com |

Privacy and Security: Your Medical Data in the Age of AI

When you visit a doctor, you share incredibly intimate information—symptoms you’re embarrassed about, mental health struggles, genetic predispositions to disease, lifestyle choices you’re not proud of. This information is protected by strict privacy laws and professional ethics. But AI systems need vast amounts of data to learn and improve, creating tension between medical privacy and technological advancement.

The concerns aren’t theoretical. Broad deployment of AI increases exposure to cyber risks, particularly when patient data is centralized or shared without robust governance. Large medical databases become attractive targets for hackers—not just because of their value on black markets, but because medical records can be used for identity theft, insurance fraud, or blackmail.

Consider what happens when an AI system is trained on data from millions of patients. That data needs to be collected, stored, transmitted to data centers, processed, and then kept for ongoing model updates and validation. Each step in this process creates potential vulnerabilities. Even when data is “de-identified,” sophisticated re-identification techniques can sometimes link anonymous medical records back to specific individuals, especially when combined with other data sources.
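One common way to reason about re-identification risk is k-anonymity: a record is harder to single out when at least k records share the same combination of "quasi-identifiers" such as partial ZIP code, birth year, and sex. The sketch below is a minimal illustration on made-up records. The field names and the helper functions are assumptions for this example, not part of any specific privacy toolkit.

```python
from collections import Counter

# Hypothetical quasi-identifier fields that could be linked to outside data sources.
QUASI_IDENTIFIERS = ("zip3", "birth_year", "sex")


def group_sizes(records, fields=QUASI_IDENTIFIERS):
    """Count how many records share each quasi-identifier combination."""
    return Counter(tuple(r[f] for f in fields) for r in records)


def risky_records(records, k, fields=QUASI_IDENTIFIERS):
    """Return records whose quasi-identifier combination appears fewer than k times."""
    counts = group_sizes(records, fields)
    return [r for r in records if counts[tuple(r[f] for f in fields)] < k]


# Synthetic, de-identified-looking records (no names, no full addresses).
records = [
    {"zip3": "100", "birth_year": 1980, "sex": "F", "diagnosis": "asthma"},
    {"zip3": "100", "birth_year": 1980, "sex": "F", "diagnosis": "diabetes"},
    {"zip3": "100", "birth_year": 1980, "sex": "F", "diagnosis": "migraine"},
    {"zip3": "100", "birth_year": 1975, "sex": "M", "diagnosis": "depression"},
    {"zip3": "945", "birth_year": 1990, "sex": "F", "diagnosis": "hypertension"},
]

smallest = min(group_sizes(records).values())
at_risk = risky_records(records, k=2)

print(f"Smallest group size: {smallest}")
print(f"Records unique on quasi-identifiers: {len(at_risk)}")
```

In this toy dataset two records are unique on their quasi-identifiers, so anyone who knows those three facts about a person could match them to a diagnosis even though no name is stored.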

There’s also the question of data sharing across institutions. AI systems work best when trained on diverse, comprehensive datasets, which often means pooling data from multiple hospitals, clinics, and health systems. But every additional entity with access to data increases privacy risks. And once data has been shared for AI training, can patients later withdraw their consent? Can they find out which AI systems have learned from their medical information?

Algorithmic Bias: When AI Amplifies Inequality

One of the most insidious risks of AI in healthcare is the potential for algorithmic bias—when AI systems produce systematically unfair outcomes for certain groups of people. This isn’t about AI being malicious; it’s about AI learning from biased data and perpetuating or even amplifying existing healthcare inequalities.

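The problem can emerge in more than one way, including training data that does not represent the patients a system will serve and proxy measures that encode historical disparities.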

Real-world examples have already emerged. A 2019 study published in Science found that one widely used algorithm for identifying patients who would benefit from additional medical care systematically underestimated the health needs of Black patients. The algorithm used healthcare costs as a proxy for health needs, but because Black patients had historically received less medical care (and thus generated lower costs), they appeared “healthier” to the algorithm even when they had serious health problems.
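The cost-as-proxy mechanism can be made concrete with a toy example. The sketch below uses entirely synthetic patients and hypothetical group labels. It assumes both groups have identical health needs but that group B has historically generated lower spending at every level of need. It is an illustration of the mechanism, not a reconstruction of the actual algorithm.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str               # hypothetical group label
    chronic_conditions: int  # stand-in for true health need
    annual_cost: float       # observed spending, used as the proxy


def make_patients():
    """Build synthetic patients: same needs in both groups, lower spending in group B."""
    patients = []
    for need in range(1, 6):
        patients.append(Patient("A", need, 2000.0 * need))
        patients.append(Patient("B", need, 1100.0 * need))  # assumed access gap
    return patients


def select_top(patients, key, k):
    """Pick the k patients ranked highest by the given score."""
    return sorted(patients, key=key, reverse=True)[:k]


def count_group(selected, group):
    return sum(1 for p in selected if p.group == group)


patients = make_patients()
by_cost = select_top(patients, key=lambda p: p.annual_cost, k=4)
by_need = select_top(patients, key=lambda p: p.chronic_conditions, k=4)

print(f"Group B selected when ranking by cost: {count_group(by_cost, 'B')} of 4")
print(f"Group B selected when ranking by need: {count_group(by_need, 'B')} of 4")
```

Ranking by need splits the extra-care slots evenly, while ranking by cost gives group B only one of the four, even though the two groups are equally sick by construction.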

The implications are profound. AI systems are supposed to make healthcare more objective and fair, removing human bias from medical decisions. But if we’re not careful, they risk automating and scaling up existing inequities, making them harder to detect and challenge. A biased human doctor might discriminate against a few dozen patients; a biased algorithm deployed across a health system might affect thousands or millions.

Over-Reliance and Skill Erosion: The GPS Effect in Medicine

There’s a phenomenon that anyone who’s used GPS navigation has probably experienced: after relying on turn-by-turn directions for years, you realize you’ve lost the ability to navigate independently. You can no longer read maps confidently, you don’t develop mental models of your city’s geography, and you feel helpless when the GPS stops working.

This “GPS effect” is emerging as a real concern in medicine as AI decision support systems become more prevalent. Early evidence suggests that heavy reliance on AI assistance may degrade clinicians’ core diagnostic skills if not balanced with appropriate human oversight and continued practice.

The concern isn’t just hypothetical. In radiology, some younger physicians trained extensively with AI assistance show decreased confidence in their independent interpretations compared to those trained with more traditional methods. In emergency medicine, over-reliance on AI-generated differential diagnoses might lead physicians to stop doing their own thorough clinical reasoning, simply accepting the computer’s suggestions.

Regulatory and Legal Uncertainty: Who’s Responsible When AI Makes Mistakes?

The legal and regulatory framework for AI in healthcare is still evolving, creating significant uncertainty for everyone involved. When an AI system contributes to a medical error, who’s liable—the doctor who followed its recommendation, the hospital that deployed it, the company that created it, or the data scientists who trained it?

Current medical liability law is built around human decision-making. It assumes a licensed professional is making clinical judgments based on their training, experience, and established standards of care. But what’s the “standard of care” when AI is involved? Is a doctor negligent for not using AI assistance? Or for relying too heavily on it? Should physicians be expected to understand how AI algorithms work before using them?

Regulatory approval processes are also struggling to keep pace. Traditional medical devices go through extensive testing before approval, but AI systems can evolve continuously as they’re exposed to new data. Should an AI system that “learns” from patient encounters be re-approved every time it updates? How do we validate systems that may perform differently in different healthcare settings or patient populations?

Transparency and Trust: The Black Box Problem

Trust is fundamental to medicine. Patients trust their doctors with their lives, and doctors trust their diagnostic tools and information sources. But AI threatens to undermine this trust in ways both subtle and profound.

The core problem is opacity. When a doctor recommends surgery, you can ask why, and they can explain their reasoning—what symptoms they observed, what test results concerned them, how your case compares to others they’ve seen. But when an AI system flags you as high-risk for a disease or recommends a particular treatment, explaining the “why” becomes far more difficult.

Many AI systems, particularly those using deep neural networks, are difficult to interpret even for their creators. They identify patterns in data that humans never explicitly programmed them to look for. They might be extremely accurate, yet unable to say why they made a specific prediction in terms humans can understand or evaluate.

The Challenge of Validating AI Across Diverse Populations

Even when AI systems work brilliantly in the settings where they were developed and tested, they may fail when deployed in different contexts. A diagnostic algorithm developed and validated at prestigious academic medical centers in major cities might not work well in rural clinics with different patient populations, different equipment, and different patterns of disease.

This variation creates risk. A hospital might deploy an AI system based on impressive published results, only to discover it performs poorly with their specific patient population. But because AI deployment often happens rapidly, without the extensive local validation that institutions traditionally require for new medical practices, these failures might not be caught until significant harm has occurred.

Navigating AI Risks: Best Practices from Research and Implementation

The risks of AI in healthcare are real and significant, but they’re not insurmountable. Researchers, clinicians, ethicists, and policymakers are developing comprehensive frameworks to manage these risks thoughtfully and responsibly. The goal isn’t to slow down AI adoption, but to ensure it happens in ways that maximize benefits while minimizing harms.

Key Framework Principles for Responsible AI Implementation

1. Fairness and Bias Mitigation: Representative Data is Essential

The foundation of fair AI is representative data. Systems must be trained on diverse datasets that reflect the full spectrum of patients they’ll eventually serve—different ages, races, ethnicities, socioeconomic backgrounds, and geographic locations. This isn’t just an ethical nice-to-have; it’s a practical necessity for AI systems that actually work for all patients.

2. Explainability: Opening the Black Box

The push for “explainable AI” in healthcare is gaining momentum. Rather than accepting AI systems that work but can’t explain themselves, researchers are developing approaches that provide interpretable insights into AI decision-making.

3. Strong Data Governance: Protecting Privacy While Enabling Progress

Balancing the data needs of AI with patient privacy requires robust governance frameworks. Leading healthcare organizations are implementing strict data protection standards in line with regulations like HIPAA and GDPR, while also creating review boards specifically focused on AI and data ethics.

4. Human-in-the-Loop: AI as Assistant, Not Replacement

Perhaps the most important principle is ensuring clinicians remain actively engaged in medical decision-making rather than simply deferring to AI recommendations. This “human-in-the-loop” approach treats AI as a powerful assistant that augments human capabilities rather than replacing human judgment.

5. Continuous Monitoring and Evaluation

AI systems in healthcare require ongoing monitoring in ways traditional medical tools don’t. Because AI can evolve, because it may perform differently in different settings, and because subtle problems might emerge only after widespread use, continuous evaluation is essential.

Forward-thinking institutions are creating AI monitoring systems that track performance metrics over time, looking for signs of degradation, bias, or unexpected failures. They’re establishing incident reporting systems specifically for AI-related issues. They’re conducting regular audits to ensure AI systems continue to perform as expected across all patient populations.
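A subgroup audit of this kind can be quite simple in principle. The sketch below is a minimal illustration on a made-up audit log. The group names, the 10-percentage-point threshold, and the function names are assumptions for this example. It computes sensitivity (the share of true cases the model caught) per patient group and flags groups that trail the best-performing group.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity per group from (group, y_true, y_pred) tuples with 0/1 labels."""
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            if y_pred == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


def flag_gaps(sensitivities, max_gap=0.10):
    """Return groups whose sensitivity trails the best group by more than max_gap."""
    best = max(sensitivities.values())
    return sorted(g for g, s in sensitivities.items() if best - s > max_gap)


# Synthetic audit log: (group, confirmed diagnosis, model alert).
audit_log = (
    [("urban", 1, 1)] * 9 + [("urban", 1, 0)] * 1
    + [("rural", 1, 1)] * 6 + [("rural", 1, 0)] * 4
    + [("urban", 0, 0)] * 5 + [("rural", 0, 0)] * 5
)

sens = sensitivity_by_group(audit_log)
flagged = flag_gaps(sens)

for group in sorted(sens):
    print(f"{group}: sensitivity {sens[group]:.2f}")
print(f"Groups needing review: {flagged}")
```

A real monitoring program would track more than one metric and repeat the check over time, but even this simple comparison surfaces the kind of population-specific failure that aggregate accuracy can hide.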

Building Trust Through Transparency and Communication

Beyond technical safeguards, responsible AI implementation requires open communication with patients and the public. Healthcare organizations are beginning to develop clear policies about how and when AI is used in patient care, with some creating patient-facing explanations of AI systems and explicit opt-out options for patients who prefer purely human decision-making.

This transparency extends to being honest about limitations and uncertainties. Rather than overselling AI capabilities or hiding its role in care decisions, leading institutions are finding that patients appreciate honesty about both AI’s strengths and its limitations. Many patients are actually enthusiastic about AI assistance when it’s explained clearly, seeing it as an additional layer of safety and expertise rather than a replacement for human care.

The Path Forward: Realizing AI’s Promise Responsibly

The transformation of healthcare through AI is not a future possibility—it’s unfolding right now, in hospitals and clinics around the world. The data makes clear that AI offers genuine, measurable benefits: time saved, costs reduced, diagnoses improved, lives extended. But the same data reveals significant risks that demand careful attention and thoughtful mitigation.

What Healthcare Leaders Must Do

For healthcare executives and administrators, the challenge is implementing AI at scale while maintaining patient safety, privacy, and trust. This requires:

- Strategic patience: Resist the temptation to rush AI deployment just because competitors are doing so. Take time to validate systems properly and ensure appropriate safeguards are in place.

- Investment in infrastructure: Beyond the AI systems themselves, invest in the data governance, monitoring systems, and training programs needed to use AI responsibly.

- Cultural change: Foster organizational cultures where it’s safe to question AI recommendations, report AI-related concerns, and maintain human judgment alongside technological assistance.

What Clinicians Must Do

For doctors, nurses, and other healthcare providers, AI brings both opportunities and obligations:

- Embrace appropriate skepticism: Use AI tools actively, but maintain your own clinical reasoning and be willing to override AI when your judgment suggests it’s wrong.

- Advocate for your patients: Question AI systems that don’t make sense, push for explainability, and ensure patients understand when AI is influencing their care.

- Maintain and develop skills: Consciously practice diagnostic and clinical reasoning skills even when AI assistance is available, ensuring you could still function effectively if technology failed.

What Patients Can Do

For patients and families navigating healthcare systems increasingly influenced by AI:

- Ask questions: Don’t hesitate to ask whether AI is being used in your care and how it’s influencing treatment decisions. Healthcare providers should be able to explain this clearly.

- Stay informed: Understand both the benefits and limitations of AI in healthcare. Be neither blindly trusting nor reflexively suspicious.

- Provide feedback: When you have concerns about AI in your healthcare experience—or when you see it working well—share that feedback with your providers and healthcare institutions.

The Bigger Picture: AI in Healthcare as a Social Choice

Ultimately, how AI transforms healthcare isn’t predetermined by technology. It’s shaped by choices we make—as a society, as healthcare institutions, as medical professionals, and as patients.

The most important thing to remember is that AI in healthcare is not about replacing the human elements of medicine—the empathy, the clinical judgment, the patient-doctor relationship. At its best, AI handles the tasks computers do well—processing vast amounts of data, recognizing complex patterns, automating routine work—freeing humans to focus on the things we do best: connecting with patients, applying nuanced judgment to complex situations, and providing compassionate care.

Conclusion: A Balanced Vision for AI in Healthcare

The data is clear: AI’s transformative potential in healthcare is backed by strong market growth and clinical evidence, from administrative efficiency gains that give clinicians back thousands of hours to predictive analytics that improve patient outcomes and save lives. These aren’t distant promises but present realities, measurable and documented across healthcare systems worldwide.

Yet the measured risks—including privacy vulnerabilities, algorithmic bias, potential skill erosion, regulatory gaps, and transparency challenges—demand equal attention. These risks aren’t reasons to reject AI, but they are compelling arguments for proceeding thoughtfully, with robust governance, continuous monitoring, and unwavering commitment to patient welfare.

Credible Resources & Further Reading

- European Commission – AI in Healthcare Overview: https://health.ec.europa.eu/ehealth-digital-health-and-care/artificial-intelligence-healthcare_en

- PubMed Central – Adoption of AI in U.S. Health Systems: https://pmc.ncbi.nlm.nih.gov/articles/PMC12202002/

- The Lancet Digital Health: leading medical journal focused on digital health technologies, including AI applications and evaluation